We Believe AI Should Be For Everyone

Traditional AI is built around expensive equipment: GPUs that cost thousands of dollars just to start experimenting with functional AI. We have huge corporations building massive data centers and signing government contracts. We are being monitored, directed, controlled, and we're supposed to pay for it...

Nexus set out to level the playing field. Now you can have real, usable AI without expensive equipment, today. This isn't a pipe dream; it's the reality, thanks to the Nexus AI system!

What makes Nexus special?

Nexus isn't just a wrapper. Nexus is an entirely new engine that allows current AI models to be used more effectively without needing expensive equipment.

Traditional AI works by sending the full conversation history with every new prompt. As your conversation grows, each response takes longer and longer, until it takes so long that you have to start over. Data centers filled with GPUs can absorb this repeated reprocessing and throw away compute like it's going out of style, and they charge us all monthly fees so they can do what they want. They also use tricks, like having the AI summarize the history, which is why it tends to forget things as the conversation goes on.

We flipped the script completely. When the model processes data, it generates working data that builds up in memory. Usually that data is thrown away after each exchange. Nexus saves it, and that data is the model's memory. All of a sudden we can continue a conversation without reprocessing the whole thing every single time it grows. We just hold the model's memory and let it process the new information, and it responds in seconds, even deep into a conversation, with almost perfect memory recall! This isn't some trick like the online providers use; it's natural behavior when we allow the model to retain its memory!
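To make the idea concrete, here is a toy sketch. It is not the Nexus engine and runs no model at all; it only counts how many tokens would need processing on each turn under the two approaches. The class names `ReprocessingSession` and `RetainedStateSession` are made up for illustration.

```python
# Toy model only: counts tokens processed per turn, no real inference.
# ReprocessingSession and RetainedStateSession are hypothetical names,
# not part of Nexus or any real library.

class ReprocessingSession:
    """Resends the full history with every new prompt."""

    def __init__(self) -> None:
        self.history_tokens = 0

    def send(self, new_tokens: int) -> int:
        self.history_tokens += new_tokens
        # The whole conversation so far is processed again.
        return self.history_tokens


class RetainedStateSession:
    """Keeps the model's accumulated state between turns."""

    def __init__(self) -> None:
        self.retained_tokens = 0

    def send(self, new_tokens: int) -> int:
        self.retained_tokens += new_tokens
        # Only the new tokens need processing; earlier state is kept.
        return new_tokens


resend, retain = ReprocessingSession(), RetainedStateSession()
turns = [100, 100, 100]  # three messages of 100 tokens each
resend_costs = [resend.send(t) for t in turns]
retain_costs = [retain.send(t) for t in turns]
print(resend_costs)  # [100, 200, 300]
print(retain_costs)  # [100, 100, 100]
```

The per-turn cost of the first approach keeps climbing with the conversation, while the second stays tied to the size of the new message.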

On top of changing the way we use the model, we also retain control over things like RAM. When a larger model is taking some time to process, the system can't just throw away memory your model needs! If you've used other local AI options, you'll know there is a limit to how much a model can successfully produce before it fails. With Nexus, if you have the RAM, it will keep producing.

The Difference is Real

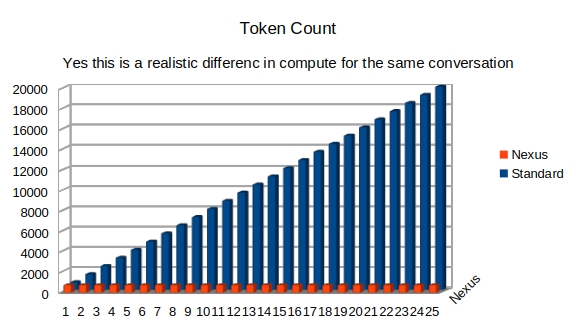

Because of the way Nexus manages the model's memory through a conversation, we don't just save a little bit of compute. When I said standard AI throws compute away, I was not exaggerating at all. (BTW, a token is about 4 characters, which is close enough to get the idea, hopefully.)
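If you want to ballpark token counts yourself, the 4-characters-per-token rule of thumb can be sketched like this. It is only an estimate; real tokenizers vary by model and by language, and `estimate_tokens` is a helper name invented here.

```python
def estimate_tokens(text: str, chars_per_token: int = 4) -> int:
    """Rough token estimate using the ~4 characters per token rule of thumb."""
    # Ceiling division so any non-empty text counts as at least one token.
    return -(-len(text) // chars_per_token)


print(estimate_tokens("Hello, Nexus!"))  # 13 characters -> 4
print(estimate_tokens("a" * 80_000))     # 20000
```

By this estimate, a 20,000-token conversation is roughly 80,000 characters of text.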

This chart is a realistic representation of a 25-message conversation that builds to a total of 20,000 tokens. With standard AI today, the history is literally sent again with every single new prompt in the same conversation! In this example, Nexus processes a total of 20,000 tokens, while standard AI has to process 260,000 tokens for the exact same conversation.
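The numbers are easy to check yourself. This short calculation assumes all 25 messages are the same size (800 tokens each), which is a simplification for illustration rather than something a real conversation would follow exactly.

```python
messages = 25
total_tokens = 20_000
per_message = total_tokens // messages  # 800, assuming equal-size messages

# Resending history: turn k processes all k messages so far.
reprocessed = sum(per_message * k for k in range(1, messages + 1))

# Retaining memory: each message is processed exactly once.
retained = per_message * messages

print(reprocessed)             # 260000
print(retained)                # 20000
print(reprocessed / retained)  # 13.0
```

Under that assumption, resending history means processing 13 times as many tokens, and the gap keeps widening as the conversation gets longer.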

This is how Nexus is able to bring real, usable AI to the real computers people already own, without needing expensive GPUs!

You don't have to worry about how secure your data is in transit or how secure our servers are. You are in total control of your own data on your own computer.

I had a personal experience with this: I was working with a great AI model, and one day its provider decided it was too dangerous and took it away from everyone. Not anymore.

You can actually run an AI model, and save and load conversations, directly from a USB flash drive. Keep your important conversations with you, plug the drive into a compatible computer, and go!